Sam Altman Says OpenAI Will Leave the EU if There’s Any Real AI Regulation

Introduction

The famous man behind ChatGPT has been lobbying the US Congress for AI regulation, on terms that would let him keep selling his AI models to users.

Sam Altman bluntly insisted that OpenAI will exit the EU for good if any restrictive AI regulation is implemented there.

The mastermind behind OpenAI’s whirlwind success, which made ChatGPT the talk of the town, told the US Congress that he is up for AI regulation, as long as he can keep selling his AI models.

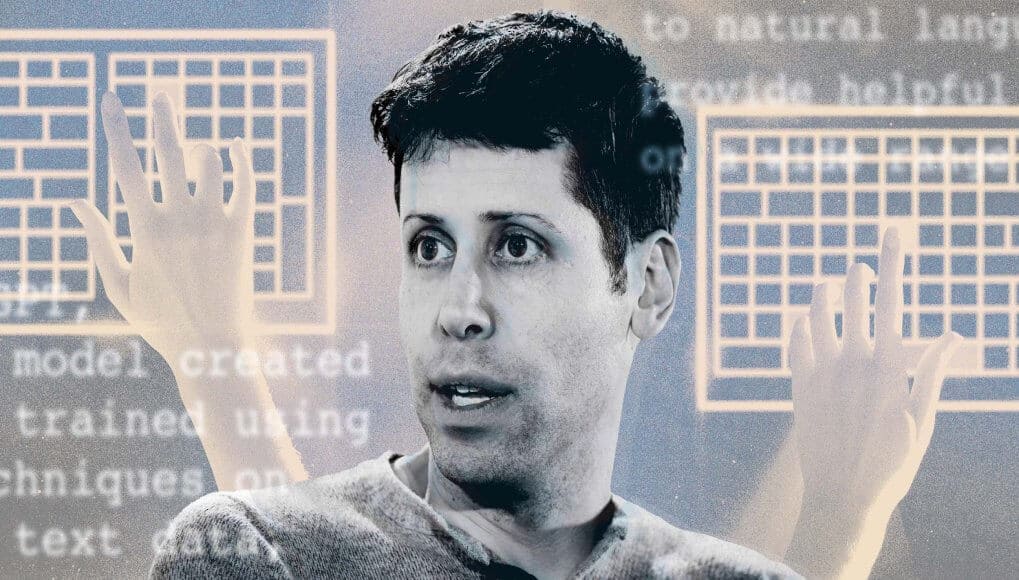

Sam Altman driving the AI pitch

Image Source: BBC

Samuel Altman, the famed co-founder and CEO of OpenAI, arrived to testify before the Senate Judiciary Subcommittee on Privacy, Technology, and the Law on the morning of May 16, 2023, in Washington, DC.

OpenAI CEO Sam Altman is on an extensive tour promoting OpenAI and pushing for AI-friendly regulation.

Altman Tour

Image Source: Digital Journal

OpenAI CEO Sam Altman’s international charm offensive is fueling the AI hype he stoked with his recent testimony before the U.S. Congress.

Fresh from the AI-friendly U.S., he has warned that he will take his tech toys to the other end of the world if regulators do not agree to play by his rules.

ChatGPT’s Creator Is Trying Hard to Buddy Up to Congress

Image Source: Forbes

Altman’s tour runs from Lagos to cities throughout Europe.

In London, UK, he went from dodging protesters to meeting big tech giants, businesses, and policymakers about his AI models, and he appears to be faring well. His primary pitch is the language-model-powered ChatGPT, along with a campaign for pro-AI regulatory policies.

During a panel discussion held at University College London, Altman said OpenAI was “going to try to comply” with EU regulations and the AI Act, but he was clearly not pleased with how the European body had defined “high-risk” systems.

The EU Regulations

Image Source: National Herald

The EU’s AI Act was first proposed by the European Commission in 2021. It classifies AI into three risk categories, with some AI deemed an “unacceptable risk”, such as social scoring systems, manipulative social engineering AI, or anything “violating fundamental rights.”

One tier down, an AI system would be classified as “high-risk” based on its intended use and would have to comply with across-the-board standards for data transparency and oversight.

Altman worries that the law as currently drafted could classify both ChatGPT and the large language model GPT-4 as high-risk, which would require the company to comply with those requirements.

As the OpenAI CEO put it, “If we can comply, we will, and if we cannot, we will cease operating… We will try. But there are technical limits to what is possible.”

The AI regulations

Image Source: IEEE Innovation at work

The law was initially designed to combat potentially hazardous AI uses such as China’s social credit system and facial recognition. Then OpenAI and fellow startups in the AI field arrived with diffusion-based AI image generation, followed by the overnight sensation of the language-model-based ChatGPT.

This prompted the EU to draft new provisions to its law last December, imposing added safety checks and risk management on “foundation models” such as the LLMs that run AI chatbots. A European Parliament committee approved these changes earlier this month.

The EU has scrutinized OpenAI much more openly than the U.S. has.

The European Data Protection Board has kept ChatGPT on its radar to make sure it complies with EU privacy laws.

However, the AI Act is not yet set in stone, and its terms could still change, which is the reason for Altman’s recent world tour.

Altman’s Address

Image Source: NBC News

Altman reiterated some of the same talking points he had delivered to Congress last week.

Altman acknowledged that he is unsure about the latent dangers of AI, but added that it could also bring many useful benefits.

The OpenAI CEO made clear to U.S. lawmakers that he was all for regulation, including brand-new safety requirements and a governing agency that would test products to ensure regulatory compliance.

He cryptically said he wanted regulation that falls “between the traditional European approach and the traditional U.S. approach,” a statement to be taken with a pinch of salt.

Concluding Thoughts

Altman had earlier declared that he did not agree at all with any regulation that limited users’ access to his tech.

He told his London audience, however, that he did not support anything that could hurt small companies or halt the open-source AI movement (on a side note, OpenAI has closed itself off as a company, citing “competition” as the reason).

Keep in mind, however, that any new regulation would inherently benefit OpenAI, allowing it to point its finger at the law if things went horribly downhill.

New compliance checks would also make developing AI models much more expensive, giving the company an added advantage in the ongoing AI race for glory.